This post is NOT about underwater imaging. With the lockdown, most of us have started using our cameras in the garden to shoot bugs, birds, family members or abstracts.

In my Instagram feed on the side you can see some examples of what I have been up to.

Shooting underwater is typically done at small apertures because of underwater optics issues. It is rare to shoot wide angle at apertures wider than f/5.6 on an MFT body or f/11 on full frame.

On land everything changes and you want as much light as possible coming into your camera to maximise dynamic range, bring out colours and minimise noise. Aperture controls not just how much light hits the sensor but also depth of field, or I should say depth of focus.

Depth of field at an equal level of magnification (size of the subject relative to the frame) depends only on the aperture of the lens. It does not matter if the lens is short or long: once the subject fills your frame, it is the f/number that influences depth of field.

2.8/2/1.4 is the Magic Number

Typically, in full frame terms, an f/2.8 lens was considered a good lens, and the reason is quite simple: if you shoot a classic 50mm lens from 1.5 meters away you will have about 15 cm, or half a foot, of depth of field. This is ideal to keep things in focus but also provides some background separation, as objects blur as they move away from the area in focus. A faster lens would let more light in, but you risk that not enough of the subject is in focus, for example nose and eyes in focus and ears not.
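The 15 cm figure can be checked with the standard thin-lens depth of field formulas. This is only a rough sketch: the circle of confusion of 0.03 mm is the value commonly assumed for full frame (it is my assumption, not something measured), and the function name is made up for illustration.

```python
def depth_of_field_mm(focal_mm, f_number, distance_mm, coc_mm=0.03):
    """Approximate total depth of field in millimetres.

    Uses the hyperfocal-distance form of the thin-lens formulas.
    coc_mm is the assumed circle of confusion (0.03 mm for full frame).
    Only valid when the subject is closer than the hyperfocal distance.
    """
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = distance_mm * (hyperfocal - focal_mm) / (hyperfocal + distance_mm - 2 * focal_mm)
    far = distance_mm * (hyperfocal - focal_mm) / (hyperfocal - distance_mm)
    return far - near


# 50mm lens at f/2.8 focused at 1.5 meters on full frame
dof = depth_of_field_mm(focal_mm=50, f_number=2.8, distance_mm=1500)
print(f"Depth of field: about {dof / 10:.0f} cm")
```

With these assumptions the result lands close to the 15 cm quoted above. A different circle of confusion will shift the number somewhat.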

And this is why f/2.8 has been the magic number for full frame photography. If we move to an APS-C sensor this becomes f/2, and on MFT the magic number is f/1.4. So f/1.4 on a 25mm lens on MFT is equivalent to f/2.8 on a 50mm lens on full frame.
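The equivalence can be sketched by dividing the full frame values by the crop factor. The crop factors below (1.5 for APS-C, 2.0 for MFT) are the usual nominal values, and the function names are my own for illustration.

```python
# Nominal crop factors relative to full frame (assumed values)
CROP_FACTORS = {"full frame": 1.0, "APS-C": 1.5, "MFT": 2.0}


def equivalent_f_number(full_frame_f_number, sensor_format):
    """f-number giving the same depth of field on a smaller format."""
    return full_frame_f_number / CROP_FACTORS[sensor_format]


def equivalent_focal_mm(full_frame_focal_mm, sensor_format):
    """Focal length giving the same field of view on a smaller format."""
    return full_frame_focal_mm / CROP_FACTORS[sensor_format]


for sensor_format in CROP_FACTORS:
    f_number = equivalent_f_number(2.8, sensor_format)
    focal = equivalent_focal_mm(50, sensor_format)
    print(f"{sensor_format}: {focal:.0f}mm at f/{f_number:.1f}")
```

APS-C works out to roughly f/1.9, which in practice means an f/2 or f/1.8 lens.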

f/1.4 also gives plenty of light to your sensor, so when you want to do some street photography or filming on MFT you can keep your ISO very low.

Exposure Value

Every scene has a level of illumination, measured in lux, and your camera needs to be able to expose for it with the right focus, the required motion blur and the lowest possible noise.

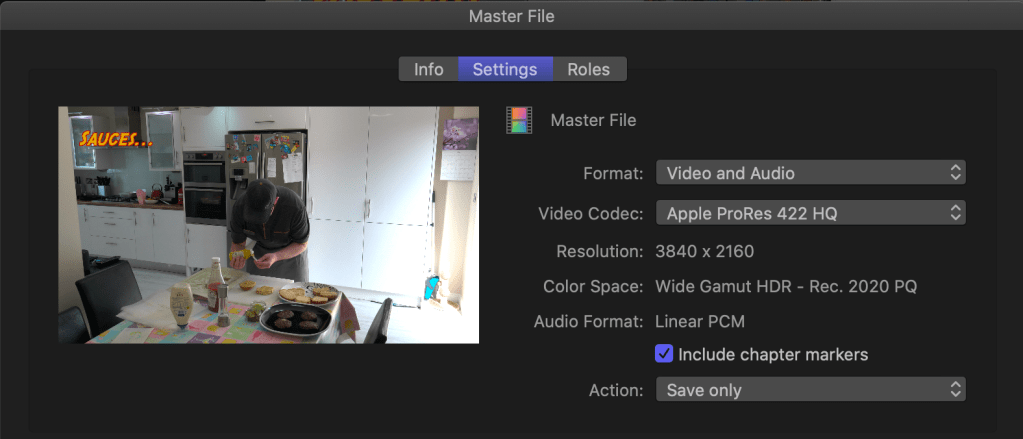

The scene in the image above was shot at f/1.4, 1/60 and ISO 640. Let's calculate the Ev, taking into account that the reference value is f/1, 1 second and ISO 100.

f/1.4 means 1 stop, 1/60 means 5.9 stops and ISO 640 means 2.67 stops. So in total we have 6.9 stops of light taken away by aperture and shutter and 2.67 stops added by ISO gain, for a total of about 4.2 Ev. Using the formula Lux = 2.5 × 2^Ev we get 47 lux, which is the level of illumination of your living room in the evening with artificial lights.
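The same arithmetic as a small sketch, using the reference of f/1, 1 second and ISO 100 and the Lux = 2.5 × 2^Ev formula from the text. The function names are my own. Note that the nominal f/1.4 is slightly less than a full stop, so the computed value comes out a touch lower than the rounded figures above.

```python
import math


def exposure_value(f_number, shutter_s, iso):
    """Ev relative to f/1, 1 second, ISO 100."""
    aperture_stops = 2 * math.log2(f_number)   # f/1.4 is about 1 stop
    shutter_stops = math.log2(1 / shutter_s)   # 1/60 is about 5.9 stops
    iso_stops = math.log2(iso / 100)           # ISO 640 is about 2.7 stops
    return aperture_stops + shutter_stops - iso_stops


def lux_from_ev(ev):
    """Scene illumination from Ev, using Lux = 2.5 * 2^Ev."""
    return 2.5 * 2 ** ev


ev = exposure_value(f_number=1.4, shutter_s=1 / 60, iso=640)
lux = lux_from_ev(ev)
print(f"Ev {ev:.2f}, about {lux:.0f} lux")
```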

If you had a slower lens, for example f/2.8, to cover the same scene you would need to shoot at ISO 2500. This would increase the noise and reduce the dynamic range and the colours.
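A quick way to see where the ISO 2500 figure comes from: light on the sensor scales with the inverse square of the f-number, so the ISO has to rise by the same factor to keep the exposure. A minimal sketch, with a hypothetical helper name:

```python
def iso_for_aperture(base_iso, base_f_number, new_f_number):
    """ISO needed to keep the same exposure at the same shutter speed
    when the aperture changes from base_f_number to new_f_number."""
    return base_iso * (new_f_number / base_f_number) ** 2


# Going from f/1.4 to f/2.8 costs two stops, so ISO rises four times
iso = iso_for_aperture(base_iso=640, base_f_number=1.4, new_f_number=2.8)
print(f"Required ISO: about {iso:.0f}")
```

The exact result is ISO 2560; ISO 2500 is the nearest setting on the camera.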

2.8 Zooms are for outdoor

There are a number of great lenses for MFT cameras that are midrange zoom and have outstanding optical quality:

The lenses above are constant aperture and weather sealed, so they are ideal for outdoor use. However, they do not offer a shallow depth of field for subject isolation, as they really are f/5.6 in full frame equivalent terms. They are also slow, meaning they will take you to the ISO 2500 zone if you try street photography or shooting movies in your living room.

Prime Rules

If you want fast lenses in MFT you need prime lenses. This is due to the physical constraints of the format.

Here is my selection. I am not a fan of vintage lenses or fully manual lenses: I like the best optical quality, and if I want to add a vintage feel I do it in post.

In more detail:

The Panasonic 12mm f/1.4 is an expensive lens that I use for astrophotography, on gimbals and for low light indoor shots in narrow rooms.

It is weather sealed, extremely sharp and fast to focus, and it works in full autofocus on a gimbal.

The Sigma 16mm f/1.4 must be the best value prime on the market for MFT. I use it for street photos and for videos. It is almost a 35mm lens in full frame terms.

The Panasonic 25mm is a workhorse for small group portraits and an ideal lens for movie style video.

The Panasonic 42.5 Nocticron is probably the best portrait lens on MFT and one of the best lenses overall. It is an f/1.2 lens; however, in terms of actual transmission it performs the same as f/1.4 lenses, but with better rendering of out of focus areas.

Why not Olympus/Others?

Of course there are equivalent primes from other brands for all focal lengths except the 12mm. They will perform equally well, and as long as they can go to f/1.4 all is good. I use Panasonic bodies so I tend to have Panasonic lenses, and I have been buying Sigma for a long time, but this is personal. There are tons of reviews on which lenses to choose, but it is not my place to do such comparisons.

Edit: since the Olympus giveaway promotion in 2020 I have replaced the Sigma 16mm f/1.4 with the Olympus 17mm f/1.2, as they are equally bulky. The Oly Pro is a fabulous lens, but at the suggested RRP it is not affordable for many.

How about Video?

It is even more essential to have fast primes for video, as you are constrained in the shutter speed you can use.

Using an f/1.4 lens at 1/50 you can shoot several scenes at different ISO values:

| ISO | Lux | Typical Scene |
| --- | --- | --- |
| 200 | 125 | Dark day |
| 400 | 63 | Indoors low lit areas |
| 800 | 32 | Full overcast, sunset/sunrise, very dark indoor |
| 1600 | 15 | Near twilight |
| 3200 | 8 | After twilight, dark |
| 6400 | 4 | Dark |
| 12800 | 2 | Very dark |
| 25600 | 1 | Candlelight |
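The lux column can be approximated with the same Lux = 2.5 × 2^Ev relationship used earlier. This sketch treats f/1.4 as exactly one stop (the square root of 2), which is my assumption about how the table was rounded; the function name is also my own.

```python
import math


def scene_lux(iso, f_number=math.sqrt(2), shutter_s=1 / 50):
    """Scene illumination that exposes correctly at the given settings.

    Equivalent to 2.5 * 2^Ev with Ev measured from f/1, 1 second, ISO 100.
    """
    return 2.5 * f_number ** 2 / shutter_s / (iso / 100)


for iso in (200, 400, 800, 1600, 3200, 6400, 12800, 25600):
    print(f"ISO {iso:>5}: about {scene_lux(iso):.1f} lux")
```

Each doubling of ISO halves the illumination you can cover, which is why the column halves row by row.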

For my purposes this is adequate. For reference, underwater at f/3.5 this means I can cover scenes down to about 100 lux in ambient light in movie mode before turning on the lights.

Conclusion

If you find yourself with grainy images or videos, invest in fast lenses. A lens is the eye of your camera and the sensor is the brain. Think about getting better lenses before investing in a new camera. If you need to shoot in lower light, a bigger sensor will not always help because of the limitation of depth of field, so you may want to think about lights instead.