The previous post on the technical nature of V-LOG has stirred up quite a bit of upset among those who advocate its use as a preferred gamma for video capture. In this post I will show some data points to help you make an informed decision based on what you are planning to shoot in your video project.

Basics of Display Gamma and Dynamic Range

First of all, a bit of background on gamma curves. The standard video gamma is based on a correction factor of 0.45, as screens decode it with the reciprocal value of 2.22.

Cinema gamma is historically based on a value of 1/2.6 as projectors decode with a gamma of 2.6.
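As a rough illustration of the reciprocal relationship, here is a minimal sketch that treats both gammas as pure power laws. This is a simplification (real broadcast curves add a linear segment near black), and the function names are my own, not taken from any standard.

```python
def encode(linear, display_gamma):
    """Capture-side correction: linear light -> signal (both 0..1)."""
    return linear ** (1.0 / display_gamma)


def decode(signal, display_gamma):
    """Display-side gamma: signal -> linear light (both 0..1)."""
    return signal ** display_gamma


VIDEO_GAMMA = 2.22   # display gamma; the encoding exponent is about 0.45
CINEMA_GAMMA = 2.6   # projector gamma; the encoding exponent is 1/2.6

mid_grey = 0.18  # 18% reflectance, used only as an illustrative input
for name, gamma in (("video", VIDEO_GAMMA), ("cinema", CINEMA_GAMMA)):
    signal = encode(mid_grey, gamma)
    print(f"{name}: signal={signal:.3f}, round trip={decode(signal, gamma):.3f}")
```

Encoding and then decoding with the same gamma returns the original linear value, which is the whole point of the correction.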

Today most of our content is consumed on phones, monitors or TV screens, as well as of course in cinemas, but for the purpose of this post I will assume we do not have a real ambition to project in cinemas.

To give an idea of the dynamic range of each display, consider these values (in stops):

| Display | Dynamic range (stops) |
| --- | --- |
| Phone (HDR) | 9+ |
| Computer Monitor | 10 |
| TV (1886 HDTV) | 11 |
| HDR TV | 13+ |
| Projector | 16+ |

It is important to understand how the content we produce will be consumed when we capture our source material as otherwise our video pipeline may be suboptimal.

Mobile phones and tablets are now the predominant platform to consume content and looking at the table above this means that in terms of dynamic range there is not a high requirement. In addition phones and computer monitors may also not be particularly accurate in terms of colour rendition.

HDR content is growing on mobile phones but, due to the lack of widespread compatibility, remains a product for high-end platforms such as HDR TVs and projectors.

It follows that content meant to look its best on the most common platforms has fairly limited requirements in terms of dynamic range. Other qualities are perhaps more important, such as sharpness, low noise, colour rendition and delivering what looks like high contrast on a limited-contrast medium.

A further obvious consideration is that standard Rec.709/sRGB video is limited to 10 stops of dynamic range on the display (contrast ratio 1000:1), while new TV sets aligned to BT.1886 can display 11 stops (contrast ratio 2000:1). So no matter how you acquire, if you end up in Rec.709 the dynamic range is limited and it becomes more important to capture tones and colors accurately.
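The link between contrast ratio and stops is just a base-2 logarithm, since each stop is a doubling of light. A small sketch, with function names of my own choosing:

```python
import math


def contrast_to_stops(contrast_ratio):
    """Convert a display contrast ratio (e.g. 1000 for 1000:1) to stops."""
    return math.log2(contrast_ratio)


def stops_to_contrast(stops):
    """Convert stops of dynamic range back to a contrast ratio."""
    return 2.0 ** stops


for ratio in (1000, 2000):
    print(f"{ratio}:1 is about {contrast_to_stops(ratio):.2f} stops")
```

The 1000:1 and 2000:1 figures are therefore rounded versions of 1024:1 and 2048:1.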

Gamma Curves for Capture

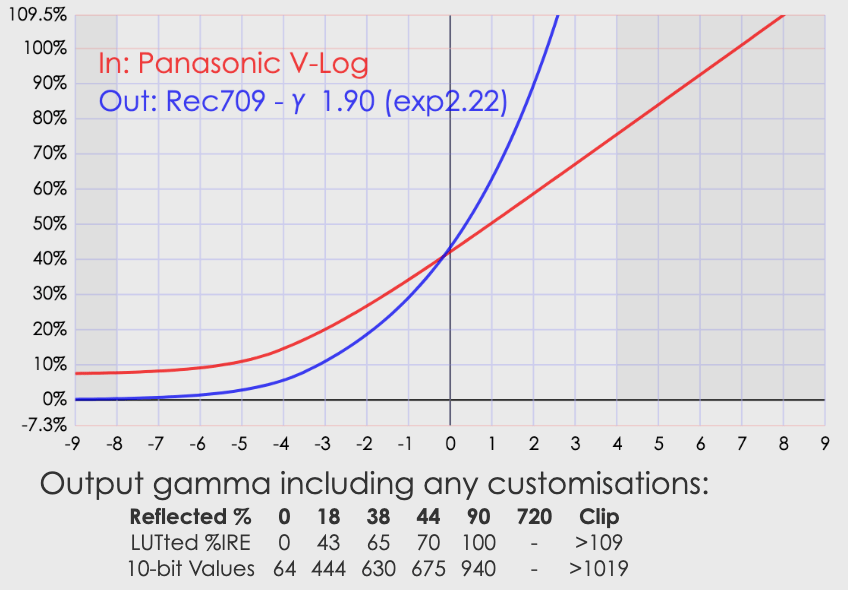

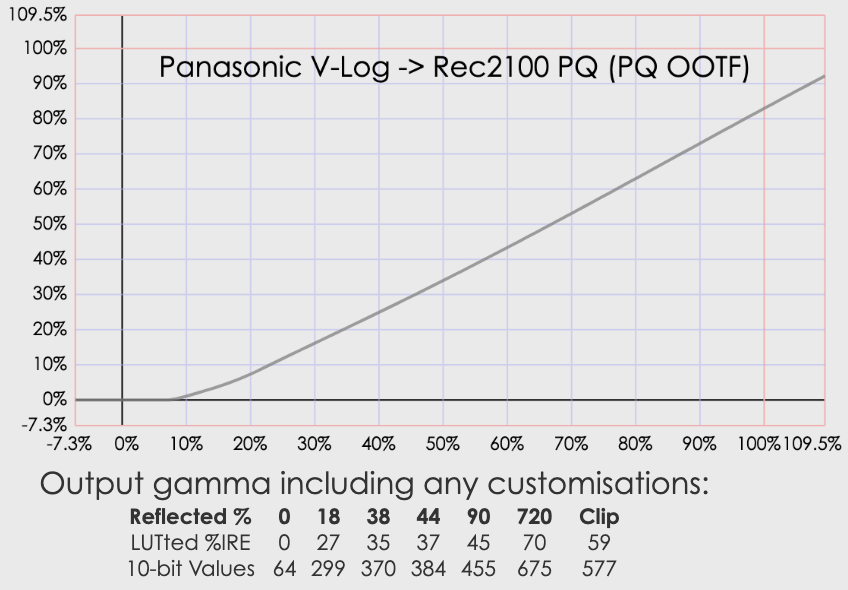

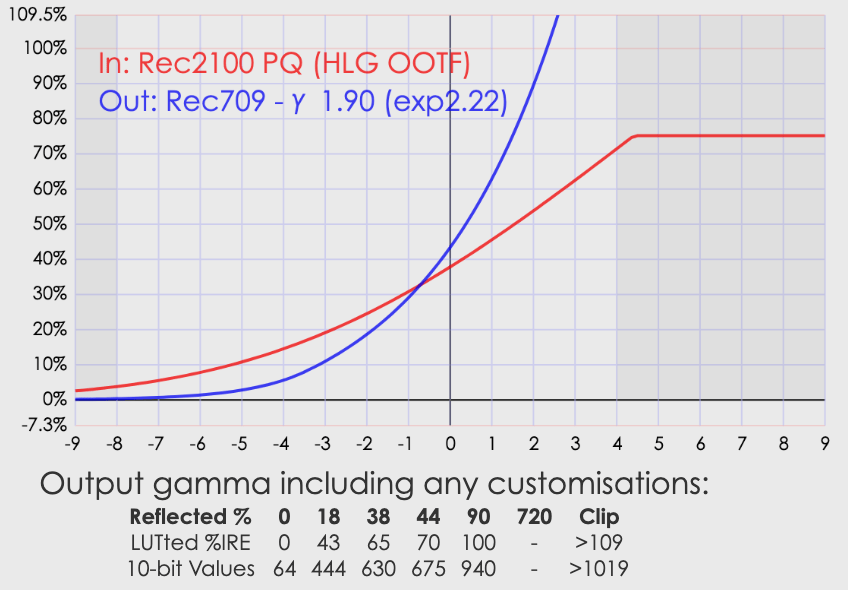

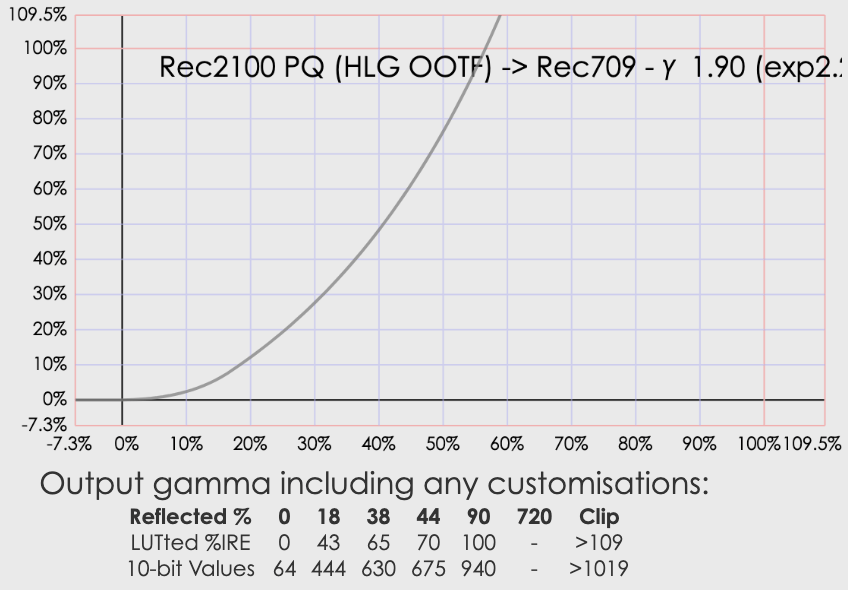

As explained, capture gamma is the reciprocal of display gamma, and therefore the majority of cameras capture with a standard video gamma (0.45) or in some cases an HDR gamma (logarithmic). I do not want this post to become a deep dive on HDR video, of which I have written enough; however, to stay on course I want to compare traditional gamma (power law) and log gamma.

In the following graph you see the bit codes output vs input of a standard video gamma (1/2.2), a cine gamma (1/2.6) and a log gamma (V-Log).

In broad terms you can see that a video gamma produces an output of 3865 against an input of 16383 (the 14-bit maximum), a cinema gamma sits at 3166, while a log gamma can take all 14 bits of data and still be within bit value 960.

It follows that to store the whole set of values read at 14 bits into a 10-bit container, a standard gamma needs to do some scaling, while a log gamma does not need scaling to fit into a 10-bit container. This also explains why exposure values in log are 2 stops higher than standard video for a 14-bit range, while for a 12-bit range the offset is one stop. As the meter is using a standard RGB gamma, all values are rescaled back.
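To get a feel for how differently the curves spend a 10-bit container, here is a hedged sketch. It does not reproduce the figures quoted above. The V-Log constants are the ones I recall from Panasonic's published curve, so verify them against the official document before relying on them. The power-law curves are idealised, and the full-range 0 to 1023 quantisation is my own simplification.

```python
import math

# V-Log constants as recalled from Panasonic's published curve (assumption: verify before use).
CUT1 = 0.01
B = 0.00873
C = 0.241514
D = 0.598206


def vlog(reflectance):
    """Scene reflectance (0.18 = mid grey) -> V-Log signal in 0..1."""
    if reflectance < CUT1:
        return 5.6 * reflectance + 0.125
    return C * math.log10(reflectance + B) + D


def power_gamma(x, display_gamma):
    """Normalised linear input (0..1) -> power-law signal in 0..1."""
    return x ** (1.0 / display_gamma)


def to_10bit(signal):
    """Clamp a 0..1 signal and quantise it to a full-range 10-bit code."""
    return round(max(0.0, min(1.0, signal)) * 1023)


# Power-law curves: input is the sensor value relative to clipping.
for stops_below_clip in range(0, 6):
    x = 2.0 ** -stops_below_clip
    print(stops_below_clip, to_10bit(power_gamma(x, 2.2)), to_10bit(power_gamma(x, 2.6)))

# V-Log: input is scene reflectance, stepping in stops around mid grey.
for stop in range(-4, 9):
    print(stop, to_10bit(vlog(0.18 * 2.0 ** stop)))
```

In this sketch the power-law curves spend fewer codes on each stop further below clipping, while V-Log spaces the stops above mid grey almost evenly.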

You will notice that at no point here have I made reference to dynamic range. The camera dynamic range is solely related to the maximum well capacity vs the read noise and it does not relate to the gamma curve being used; however, the different compression of each gamma curve has an effect on how tones are mapped and on the perceived dynamic range.
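The well capacity vs read noise relationship can be sketched as a ratio expressed in stops. The sensor figures below are hypothetical and only there to show the arithmetic; note that no gamma curve appears anywhere in the calculation.

```python
import math


def engineering_dr_stops(full_well_electrons, read_noise_electrons):
    """Dynamic range in stops: largest storable signal over the noise floor (SNR = 1)."""
    return math.log2(full_well_electrons / read_noise_electrons)


# Hypothetical sensor figures, for illustration only.
print(round(engineering_dr_stops(50_000, 3.0), 1))
```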

Camera Dynamic Range vs Display Dynamic Range

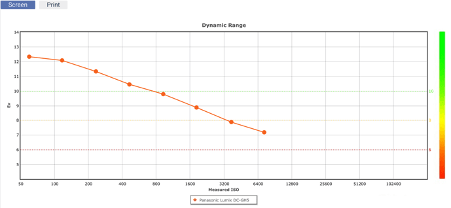

The overall camera dynamic range influences what you can do with your content and if it is worthwhile to produce an HDR version or not.

If we compare the previous table of display dynamic range vs camera dynamic range, and we focus on nominal values (SNR=1) and photographic values (SNR=20), we can see what device we need for our purpose.

| Camera Type | DR in stops (SNR=1) | PDR in stops (SNR=20) |
| --- | --- | --- |
| Compact | 12.9 | 9.8 |
| MFT | 12.3 | 10.3 |
| APS-C | 14 | 11.1 |
| Full Frame | 14 | 12.2 |

We can see that if all we need to do is to output on a mobile device or a computer monitor, smaller sensors are adequate; however, for HDR production larger formats are preferred. Obviously we can stretch SNR to lower values, and this will raise the values in the above table by 1 stop or so, but it will not change our reasoning substantially.
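One way to read the two tables together is to compute the headroom of each camera over each display. The sketch below copies the values from the tables in this post. Treating the "+" entries as their minimum value, and the `extra_stops` parameter for relaxing the SNR threshold, are my own assumptions.

```python
# Values copied from the two tables in this post (stops); "+" entries use their minimum.
DISPLAY_STOPS = {
    "Phone (HDR)": 9,
    "Computer Monitor": 10,
    "TV (1886 HDTV)": 11,
    "HDR TV": 13,
    "Projector": 16,
}
CAMERA_PDR = {"Compact": 9.8, "MFT": 10.3, "APS-C": 11.1, "Full Frame": 12.2}


def headroom(camera, display, extra_stops=0.0):
    """Camera PDR minus display range, in stops; positive means the camera exceeds the display."""
    return CAMERA_PDR[camera] + extra_stops - DISPLAY_STOPS[display]


for camera in CAMERA_PDR:
    row = ", ".join(f"{d}: {headroom(camera, d):+.1f}" for d in DISPLAY_STOPS)
    print(f"{camera} -> {row}")
```

Calling `headroom("Full Frame", "HDR TV", extra_stops=1.0)` shows how accepting a lower SNR moves a full frame camera from just short of an HDR TV to just above it.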

Gamma curves vs Bit codes mapping

The various gamma curves have a different distribution of tones (bit values). In this table I compare a video gamma, a cinema gamma, a reduced 12-stop log and a full V-Log for a full frame sensor camera.

For the purpose of this comparison blacks are bit codes in the lowest 10%, Shadows are up to 18%, midtones up to 75%, highlights up to 90% and whites above. Blacks and whites do not have color information but just brightness while shadows, midtones and highlights contain respectively dark, medium and light hues.
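The zone boundaries above can be written down as a small classifier. This is only a sketch: the 10-bit default, the inclusive upper bounds and the function names are my assumptions, and it does not reproduce the percentages quoted for each curve.

```python
from collections import Counter

# Upper bound of each zone as a fraction of the maximum code; anything above is "whites".
ZONES = ((0.10, "blacks"), (0.18, "shadows"), (0.75, "midtones"), (0.90, "highlights"))


def tonal_zone(code, max_code=1023):
    """Return the tonal zone for a bit code, using the thresholds from this post."""
    level = code / max_code
    for upper, name in ZONES:
        if level <= upper:
            return name
    return "whites"


def zone_shares(codes, max_code=1023):
    """Fraction of the given bit codes that falls in each zone."""
    codes = list(codes)
    counts = Counter(tonal_zone(c, max_code) for c in codes)
    return {name: n / len(codes) for name, n in counts.items()}


print(tonal_zone(512))
print(zone_shares([0, 150, 512, 512]))
```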

A standard video gamma has over 45% of midtones; these are the colors and tones with intermediate values, so it produces softer tones. Shadows are just above 35%, with highlights under 10% and blacks and whites around 5%.

If we look at a cinema gamma we can see that shadows are now predominant and very close to the midtones, while highlights, whites and blacks are compressed.

V-LogL (12 stops DR, 12-bit implementation) has the largest range in shadows. Midtones are compressed by around 50% compared to a video gamma, blacks are substantially more than in a video gamma, and while highlights are compressed, whites and super whites are greatly expanded.

Full V-Log is dominated by whites, which make up 38% of the bit values. Shadows are at 32%, with midtones now under 20% and highlights compressed. Blacks remain expanded.

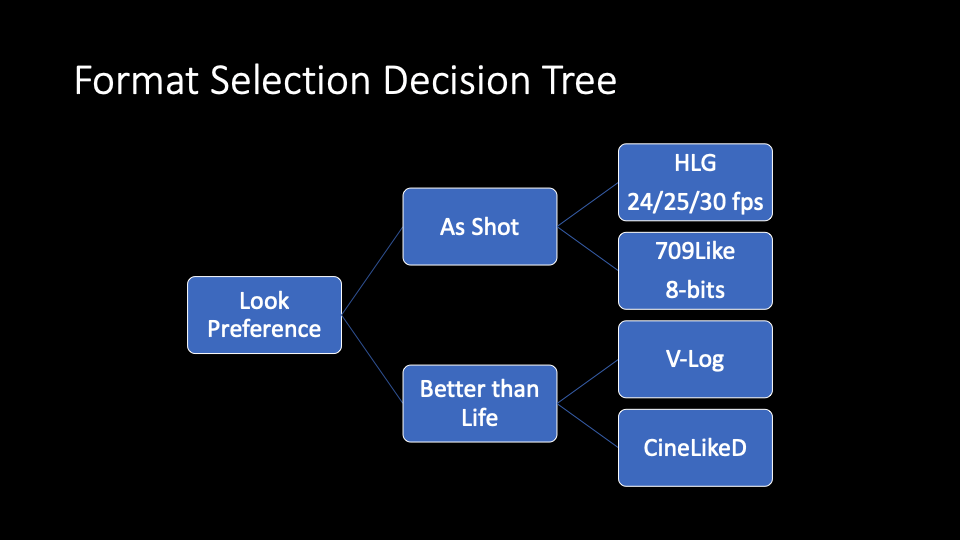

Choosing a Gamma Curve for your Video Project

Our decision tree starts from the content which determines the device we need. Once we have a device capable of a given dynamic range we can make appropriate choices in terms of gamma curve.

Broadly speaking, compact cameras and Micro Four Thirds cameras do not have enough dynamic range at a sufficient level of SNR to justify a high dynamic range gamma. There are some very specific exceptions where this may be worth it (Panasonic GH5s), but in general terms a standard MFT camera for photography should be limited to a video or cinema gamma for optimal results, as the dynamic range is limited and compression is not required.

If you own an MFT camera your choice is between a video gamma and a cinema gamma. Depending on the look you want to achieve you may choose one or the other. Video gamma has generally more contrast (more blacks and highlights and whites) while Cine gamma has a balance between midtones and shadows but not strong blacks and whites giving overall a softer look.

If you own a full frame or APS-C camera you have more options, which means you need to think more about the gamma curve to be used. HDR content requires a log curve. If you do not want to output HDR, or want to achieve a different look, you can instead decide to use a cinema or video gamma. It is important to note that log gammas have lots of bit values in whites and super whites, and those do not exist in many typical scenes.
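The decision tree in this section can be summarised as a tiny lookup. This is a simplified sketch of the reasoning above, not a rule: the function name and sensor labels are hypothetical, and it ignores exceptions such as the GH5s.

```python
SMALL_SENSORS = {"compact", "mft"}
LARGE_SENSORS = {"apsc", "full frame"}


def suggest_gamma(sensor, hdr_delivery):
    """Return the gamma curves worth considering for a sensor class and delivery target."""
    key = sensor.lower().replace("-", "")
    if key in SMALL_SENSORS:
        # Limited dynamic range at useful SNR: log compression is not required.
        return ("video", "cinema")
    if key in LARGE_SENSORS:
        # HDR delivery requires a log curve; otherwise pick by the look you want.
        return ("log",) if hdr_delivery else ("video", "cinema")
    raise ValueError(f"unknown sensor class: {sensor}")


print(suggest_gamma("MFT", hdr_delivery=False))
print(suggest_gamma("Full Frame", hdr_delivery=True))
```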

Scene vs Dynamic Range

While the current effort of camera manufacturers is to promote high dynamic range, the reality is that in most cinematography situations you use devices that reduce contrast and therefore dynamic range (think about pro-mist filters).

The DR of a scene can be evaluated by looking at the histogram. This is of course influenced by the gamma curve, so it is important to do this evaluation by taking a photograph, not video.
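If you have access to linear raw values from the photograph, a rough numerical version of reading the histogram is to measure the distance in stops between its dark and bright ends. The sketch below is one possible approach, not a standard method. The 1st and 99th percentile cut-offs are my own assumption to ignore stray pixels.

```python
import math


def scene_stops(linear_values, low_pct=1.0, high_pct=99.0):
    """Estimate scene dynamic range in stops from linear (raw) luminance values."""
    values = sorted(v for v in linear_values if v > 0)
    if not values:
        raise ValueError("need at least one positive luminance value")

    def percentile(p):
        # Nearest-rank style lookup, good enough for a rough estimate.
        return values[round(p / 100.0 * (len(values) - 1))]

    return math.log2(percentile(high_pct) / percentile(low_pct))


# Synthetic examples: a wide scene spanning 1..1024 and a flat scene spanning 100..200.
print(scene_stops([2 ** i for i in range(11)]))
print(scene_stops([100, 120, 150, 200]))
```

A low-contrast scene returns only a stop or two, which is a hint that a high dynamic range gamma has little to offer for that shot.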

The following are examples of scenes with the underlying histogram.

It may be useful to see the effect of log using the LUTs in Photoshop on the raw data.

The example above shows that a significant number of midtones have been lost in the conversion with no DR benefit as the scene essentially lacked it.

Conclusion

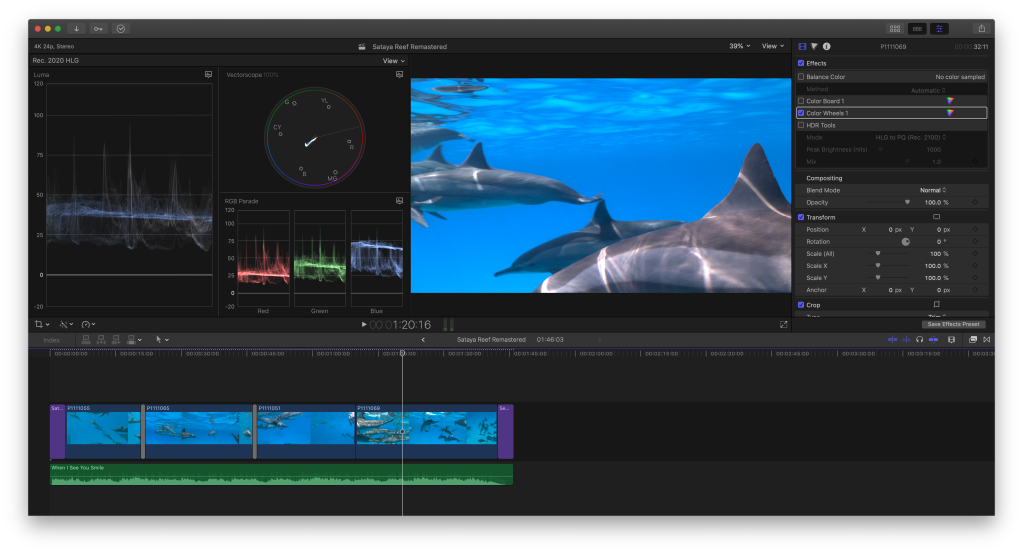

For underwater video purposes, as the water reduces contrast and smooths highlights, I would not recommend shooting log or HDR, with the exception of very specific scenarios. Likewise, if I am shooting a v(ideo)log or an interview there is no requirement for extra dynamic range and log compression is not required.

Outdoor scenes, especially in bright conditions such as snow, are appropriate for HDR and should be shot in a log format, assuming of course that the luminance of the scene is not being reduced with ND filters or similar.

Events like weddings can have challenging conditions, with a mix of low-contrast indoor scenes and bright outdoor scenes, and with the bride typically dressed in white, so in effect they can be very demanding on the equipment. You need to bear in mind, however, that if your delivery format is just HD video the benefits of log gamma are greatly reduced and extensive work may be required to bring colours back in check. Always account for the limitations of your equipment as well.