In 2018 I wrote the original article after acquiring the GH5, as I was faced with a ton of nonsense about which format to use when shooting video. With the S series software stack Panasonic has changed the available options, so I thought it was about time to refresh the original article. As before, I will focus my analysis on 4K video and ignore other formats. This time I will be looking at the NTSC standard of 29.97 and 59.94 frames per second. This is simply because today the majority of content produced with Panasonic consumer digital cameras is consumed online, and computer screens typically run at a 60 Hz refresh rate, so shooting at anything other than 30 or 60 fps will result in choppy playback. This presents some challenges if you are in a PAL zone and are shooting under artificial lights; however, for the purpose of this article I will ignore this issue. Obviously you could shoot 24 fps and rely on a 24 to 30 fps conversion, which is patchy at best. For simplicity I will refer to 30 and 60 fps rather than the exact values.
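The choppiness comes from cadence: on a 60 Hz screen every source frame has to stay up for a whole number of refreshes. The Python sketch below counts how many refreshes each frame is held for, assuming a display that simply shows the most recent frame at every refresh (no frame blending or motion interpolation) and nominal frame rates; the function name is only an illustration.

```python
def refresh_cadence(fps: int, refresh_hz: int, frames: int) -> list[int]:
    """Return how many display refreshes each of the first `frames` source frames is held for.

    Frame n becomes visible at the first refresh at or after time n / fps,
    i.e. at refresh index ceil(n * refresh_hz / fps). Integer ceiling
    division keeps the arithmetic exact for nominal frame rates.
    """
    def first_refresh(n: int) -> int:
        return -(-n * refresh_hz // fps)  # ceil(n * refresh_hz / fps)

    return [first_refresh(n + 1) - first_refresh(n) for n in range(frames)]


for fps in (24, 25, 30, 60):
    print(fps, "fps on 60 Hz:", refresh_cadence(fps, 60, 6))
```

24 fps alternates between three and two refreshes per frame (the familiar 3:2 pulldown judder) and 25 fps is irregular, while 30 and 60 fps hold every frame for the same number of refreshes.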

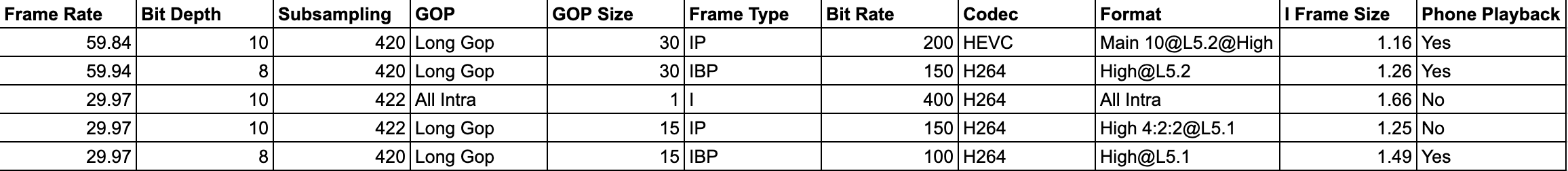

Today there are five recording settings for UHD:

- 200 Mbps 420 10 Bits Long GOP 60 fps

- 150 Mbps 420 8 Bits Long GOP 60 fps

- 100 Mbps 420 8 Bits Long GOP 30 fps

- 150 Mbps 422 10 Bits Long GOP 30 fps

- 400 Mbps 422 10 Bits All-Intra 30 fps.

The last option is only available on the GH5 series and on the S1H. The first option is only available on the S series and the GH5M2.

Long GOP vs All Intra

The difference between Long GOP and All-Intra is that in Long GOP the encoder works on a group of pictures (GOP) rather than on separate, independent pictures.

Within a group of pictures there are different types of frames:

- I (intra-coded) frames containing a full picture

- P (predictive-coded) frames containing a motion-compensated prediction from previous frames

- B (bi-predictive-coded) frames containing a prediction from previous and/or following frames

It is important to note that, when B frames are used, frames are not stored in display order within a GOP: the GOP needs to be decoded and the frames reordered before they can be played, and this requires processing power.
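A simplified model of the reordering: each anchor frame (I or P) has to be decoded before the B frames that sit in front of it on screen, because those B frames are predicted from it. The sketch below assumes B frames reference only the nearest anchors on either side and are not themselves used as references (real encoders may use more elaborate reference structures); it is an illustration, not Panasonic's implementation.

```python
def decode_order(display: str) -> list[tuple[str, int]]:
    """Reorder frame types from display order into a simplified decode order.

    Each output item is (frame type, display index). Every anchor (I or P)
    is emitted before the B frames that precede it in display order,
    because those B frames need it as a forward reference.
    """
    out: list[tuple[str, int]] = []
    pending_b: list[tuple[str, int]] = []
    for idx, kind in enumerate(display):
        if kind == "B":
            pending_b.append((kind, idx))  # wait for the next anchor
        else:
            out.append((kind, idx))        # decode the anchor first...
            out.extend(pending_b)          # ...then the B frames that depend on it
            pending_b = []
    out.extend(pending_b)  # trailing B frames would need the next GOP's I frame
    return out


print("".join(kind for kind, _ in decode_order("IBBPBBP")))  # IPBBPBB
```

With M=1 (no B frames) the decode order is the same as the display order, so no reordering is needed.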

The reason why H.264/HEVC is so efficient is that within a group of pictures there is only one full frame and the rest are predictions. Clearly, if the prediction algorithm is accurate, the perceived quality of Long GOP is very high and similar to All-Intra clips.

This is why comparing All-Intra and Long GOP using static scenes, or scenes with repetitive movement that the codec can predict very accurately, is a fundamental error.

So which format should you choose?

To understand how this works we need to dig deeper into the structure of the GOP, but before doing so let’s evaluate the All-Intra codec.

AVC All-Intra explanation

This codec records at 400 Mbps, so at 30 fps (29.97) this means circa 13.3 Mbit per frame, or 1.67 MB per frame, and there is no motion prediction, so each frame is independent of the others. The GH5 implementation of All-Intra does not use CABAC entropy coding, only CAVLC; this makes the resulting files easier to decode and to edit. The idea of All-Intra is that you don’t need powerful hardware to edit without converting to an intermediate codec. However, in my experience this is not entirely true, and you need a decent GPU to play it back and edit in real time without issues.
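The same per-frame arithmetic can be applied to every setting listed at the top of the article. This is a sketch using the exact NTSC frame rates and decimal megabytes (1 MB = 10^6 bytes); for the Long GOP settings the result is only an average across I, P and B frames, since I frames are larger than the predicted frames.

```python
# (setting, bit rate in Mbit/s, frame rate in fps)
SETTINGS = [
    ("200 Mbps 420 10 bit Long GOP", 200, 59.94),
    ("150 Mbps 420 8 bit Long GOP", 150, 59.94),
    ("100 Mbps 420 8 bit Long GOP", 100, 29.97),
    ("150 Mbps 422 10 bit Long GOP", 150, 29.97),
    ("400 Mbps 422 10 bit All-Intra", 400, 29.97),
]


def average_frame_budget(bitrate_mbps: float, fps: float) -> tuple[float, float]:
    """Return the average budget per frame as (Mbit, MB), with 1 MB = 10**6 bytes."""
    mbit_per_frame = bitrate_mbps / fps
    return mbit_per_frame, mbit_per_frame / 8


for name, rate, fps in SETTINGS:
    mbit, mb = average_frame_budget(rate, fps)
    print(f"{name}: {mbit:.2f} Mbit = {mb:.2f} MB per frame on average")
```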

If you consider a JPEG image of a 3840×2160 frame on the GH5, you see that it stores around 4.8 MB per image because there is no chroma sub-sampling, so if you wanted exactly the same result you would need ProRes 4444 to get comparable quality (and this does not even take into account that JPEGs are 8-bit images).

Video uses chroma sub-sampling, so colour information is stored at a lower resolution than luminance. In their ProRes white paper Apple state that both ProRes 422 and 422 HQ are adequate for 10-bit colour depth and 422 sub-sampling, although they show some quality differences and offer different headroom for editing. If you count 50% for 420 sub-sampling and 67% for 422, you get frame sizes of around 2.34 MB and 3.5 MB, which correspond to ProRes 422 and ProRes 422 HQ individual frame sizes.
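The 50% and 67% factors come from counting samples in the J:a:b notation. A minimal sketch, assuming uncompressed data with the same bit depth for luma and chroma samples:

```python
from fractions import Fraction


def samples_per_pixel(j: int, a: int, b: int) -> Fraction:
    """Average number of samples per pixel for J:a:b chroma subsampling.

    The reference block is J pixels wide and 2 rows high. Every pixel has a
    luma sample; each of the two chroma planes (Cb, Cr) has `a` samples in
    the first row and `b` samples in the second row.
    """
    luma = 2 * j
    chroma = 2 * (a + b)
    return Fraction(luma + chroma, 2 * j)


full = samples_per_pixel(4, 4, 4)  # 3 samples per pixel
for name, scheme in (("422", (4, 2, 2)), ("420", (4, 2, 0))):
    print(name, samples_per_pixel(*scheme) / full)  # 422 -> 2/3, 420 -> 1/2
```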

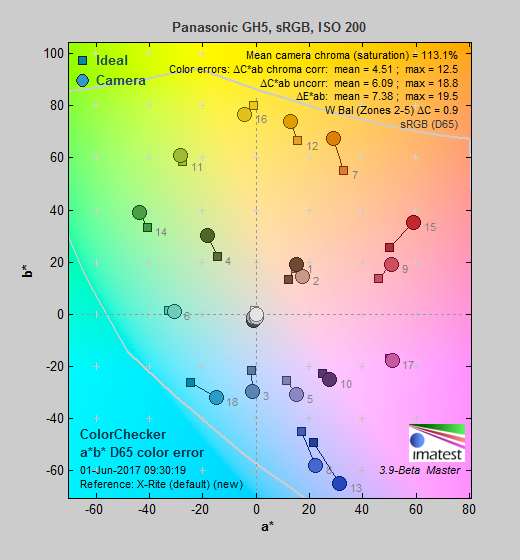

It would appear that All-Intra at 400 Mbps falls short of Apple’s recommended bit rate for 422 10-bit colour; however, practical tests show that AVC All-Intra at 400 Mbps is perceptually identical to ProRes 422 HQ and uses much less space. Some time ago my friend Paal Rasmussen and I also made some SNR measurements, and we did not find significant improvements shooting ProRes 422 HQ versus All-I on card.

Long GOP Codecs

Coming back to the other recording options, we still need to evaluate how the various Long GOP codecs compare with each other.

To fully understand a codec we need to decompose the GOP into its individual frames and evaluate the information recorded. Wikipedia states that P frames are approximately half the size of an I frame and B frames approximately 25%. I analysed Panasonic GH5M2 clips using ffprobe, a component of FFmpeg that reports exactly what is in each frame, to see whether this explains some people’s claims that there is no difference between the settings.
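For anyone who wants to repeat this kind of analysis, here is a Python sketch that calls ffprobe and summarises frame types and sizes. The ffprobe options used (`-select_streams`, `-show_entries frame=pict_type,pkt_size`, `-of json`) are standard ones, but this is not necessarily the exact procedure behind the figures in this article, and the file name in the usage line is hypothetical. `pkt_size` is the compressed size in bytes of the packet behind each frame; averages are reported in decimal MB.

```python
import json
import subprocess
import sys
from collections import defaultdict


def probe_frames(path: str) -> str:
    """Run ffprobe on the first video stream and return its JSON output."""
    cmd = [
        "ffprobe", "-v", "error",
        "-select_streams", "v:0",
        "-show_entries", "frame=pict_type,pkt_size",
        "-of", "json",
        path,
    ]
    return subprocess.run(cmd, capture_output=True, text=True, check=True).stdout


def summarise(ffprobe_json: str) -> tuple[str, dict[str, float]]:
    """Return the frame-type sequence (e.g. 'IBBP...') and the mean size in MB per type."""
    sizes: dict[str, list[int]] = defaultdict(list)
    pattern = []
    for frame in json.loads(ffprobe_json).get("frames", []):
        kind = frame.get("pict_type", "?")
        pattern.append(kind)
        size = frame.get("pkt_size")
        if size not in (None, "N/A"):
            sizes[kind].append(int(size))  # ffprobe reports sizes in bytes
    means = {kind: sum(v) / len(v) / 1e6 for kind, v in sizes.items()}
    return "".join(pattern), means


if __name__ == "__main__" and len(sys.argv) > 1:
    pattern, means = summarise(probe_frames(sys.argv[1]))
    print("first 30 frames:", pattern[:30])
    for kind, mb in sorted(means.items()):
        print(f"{kind} frames: {mb:.2f} MB on average")
```

Usage, with a hypothetical clip name: `python gop_probe.py P1000123.MOV`. Note that ffprobe decodes every frame, so long clips take a while.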

Panasonic’s documentation of its H.264 implementation is here: documentation

Unfortunately, I have not found any documentation of the HEVC implementation to date.

200 Mbps 420 10 Bits Long GOP 60 fps Analysis

An analysis with ffprobe shows a GOP structure with N=30 and M=1 where N is the length in frames of the group of pictures and M is the distance between I or P frames.
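N and M can be read off the sequence of frame types that ffprobe reports in its `pict_type` field. A small sketch, assuming the sequence starts on an I frame and that the GOP structure is fixed; the function name is illustrative:

```python
def gop_structure(pattern: str) -> tuple[int, int]:
    """Return (N, M) for a frame-type string such as 'IBBPBBIBBPBB'.

    N is the distance from one I frame to the next; M is the distance from
    one anchor frame (I or P) to the next. Assumes a fixed GOP structure.
    """
    i_frames = [k for k, t in enumerate(pattern) if t == "I"]
    anchors = [k for k, t in enumerate(pattern) if t in ("I", "P")]
    if len(i_frames) < 2:
        raise ValueError("need at least two I frames to measure N")
    return i_frames[1] - i_frames[0], anchors[1] - anchors[0]


print(gop_structure(("I" + "P" * 29) * 2))  # (30, 1), the structure reported for this codec
```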

This codec does not use B frames, only I and P frames.

Analysing a set of I frames of a fixed subject at 60 fps resulted in an I frame size of 1.16 MB. This value is quite low; however, HEVC is much more efficient than H.264, so the figure is not directly comparable with those of the H.264 codecs.

Some time ago I shot this test video comparing the recording of this codec with a Ninja V in ProRes 422 HQ. As you can see, there are no major differences, although I did not push the grading in the clip.

The speed ramps in this video use this codec.

150 Mbps 420 8 Bits Long GOP 60 fps Analysis

An analysis with ffprobe shows a GOP structure with N=30 and M=3 where N is the length in frames of the group of pictures and M is the distance between I or P frames.

So each group of pictures looks like this:

IBBPBBPBBPBBPBBPBBPBBPBBPBBPBB before it repeats again.
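With a fixed structure the whole pattern follows from N and M: an I frame, then an anchor frame every M frames with B frames in between. A short sketch in display order that reproduces the patterns discussed in this article:

```python
def gop_pattern(n: int, m: int) -> str:
    """Build the display-order frame types of one GOP of n frames with anchor spacing m."""
    return "".join(
        "I" if k == 0 else ("P" if k % m == 0 else "B") for k in range(n)
    )


print(gop_pattern(30, 3))  # IBBPBBPBBPBBPBBPBBPBBPBBPBBPBB
print(gop_pattern(15, 3))  # IBBPBBPBBPBBPBB
print(gop_pattern(15, 1))  # an I frame followed by 14 P frames
```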

Analysing a set of I frames of a fixed subject at 60 fps resulted in an I frame size of 1.26 MB.

One very important aspect of the 150 Mbps codec is that its GOP is double the length of that of the single frame rate 100 Mbps codec, so there is the same number of key frames per second. It is therefore NOT true that this codec is better at predicting motion; however, in the majority of cases the additional frames give better slow motion than what can be done in software.
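The key-frame argument is easy to check: dividing the frame rate by the GOP length N measured with ffprobe gives the number of I frames per second. A quick sketch using nominal frame rates:

```python
# (setting, GOP length N from the ffprobe analysis, nominal frame rate)
LONG_GOP = [
    ("200 Mbps 420 10 bit", 30, 60),
    ("150 Mbps 420 8 bit", 30, 60),
    ("100 Mbps 420 8 bit", 15, 30),
    ("150 Mbps 422 10 bit", 15, 30),
]


def i_frames_per_second(n: int, fps: float) -> float:
    """I frames per second for a GOP of n frames recorded at fps."""
    return fps / n


for name, n, fps in LONG_GOP:
    print(f"{name}: {i_frames_per_second(n, fps):.1f} I frames/s, one every {n / fps:.2f} s")
```

All four settings produce two I frames per second, so doubling the frame rate doubles the number of predicted frames between key frames rather than adding key frames.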

100 Mbps 420 8 Bits Long GOP 30 fps Analysis

An analysis with ffprobe shows a GOP structure with N=15 and M=3 where N is the length in frames of the group of pictures and M is the distance between I or P frames.

So each group of pictures looks like this:

IBBPBBPBBPBBPBB before it repeats again.

Analysing a set of I frames of a fixed subject at 30 fps resulted in an I frame size of 1.49 MB, the largest of all the settings if we exclude All-I.

150 Mbps 422 10 Bits Long GOP 30 fps Analysis

An analysis with ffprobe shows a GOP structure with N=15 and M=1, which means this codec does not use B frames but only I and P frames, so the GOP structure is as follows:

IPPPPPPPPPPPPPP before it repeats again.

Analysing a set of I frames of a fixed subject at 30 fps resulted in an I frame size of 1.25 MB.

H264 Codec Ranking for Static Image Quality UHD

So in terms of absolute image quality, not taking other factors into account, the Panasonic GH5M2 and S series movie recording settings ranked by codec quality (based on the frame sizes measured above) are as follows:

- 400 Mbps 422 10 Bit All intra 30 fps (1.67 MB per frame)

- 100 Mbps 420 8 Bit Long Gop 30 fps (1.49 MB per frame)

- 150 Mbps 420 8 Bit Long Gop 60 fps (1.26 MB per frame)

- 150 Mbps 422 10 Bit Long Gop 30 fps (1.25 MB per frame)

The 100 Mbps and 400 Mbps codecs differ only marginally, while the two 150 Mbps Long GOP codecs are well behind.

Note that because the technology is different I cannot directly compare the new 200 Mbps HEVC codec; however, based on visual impression and ability to grade, I would recommend it over the 150 Mbps 420 8 bit codec.

Conclusion

If you have a camera with the 400 Mbps All-Intra codec, this remains the best format to use. V90 cards have dropped in price and are now available in capacities up to 256 GB. Unfortunately, this option is only available on the GH5 series and on the S1H.
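To put the card sizes in context, here is a rough recording-time estimate. It is a sketch that assumes decimal units (1 GB = 10^9 bytes, 1 Mbps = 10^6 bit/s) and ignores file-system overhead, audio and container metadata, so real-world figures will be somewhat lower.

```python
def recording_minutes(card_gb: float, bitrate_mbps: float) -> float:
    """Approximate minutes of footage that fit on a card of card_gb gigabytes."""
    card_bits = card_gb * 1e9 * 8
    return card_bits / (bitrate_mbps * 1e6) / 60


def write_speed_mb_s(bitrate_mbps: float) -> float:
    """Sustained write speed in MB/s needed for a given video bit rate."""
    return bitrate_mbps / 8


print(f"{recording_minutes(256, 400):.0f} min on a 256 GB card")  # 85 min
print(f"{write_speed_mb_s(400):.0f} MB/s needed")  # 50 MB/s, below the 90 MB/s V90 minimum
```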

If your camera does not have All-I, you can of course purchase an external recorder, which in some cases will allow you to shoot RAW; however, this is not necessarily going to give better image quality and will definitely extend your processing time.

My revised advice, if your camera does not have All-I and you don’t have an external recorder, is as follows:

- Use the 100 Mbps Long GOP codec: it compresses very efficiently and the perceived quality is very good. You need to get exposure and white balance right in camera, as the clips may not withstand extensive corrections. With footage containing a lot of motion there is a risk of motion prediction errors that generate artefacts, but in my experience this risk is not very high.

- Use the new 200 Mbps HEVC codec for double frame rate: it is not hard to process, as 10-bit HEVC has hardware acceleration on most current platforms.

Generally there appears to be no benefit in using the internal 422 10 bit codec or the 420 8 bit double frame rate codec, due to the limitations of their GOP structure. In addition, the lack of hardware acceleration for 10-bit H.264 means you will need to convert the files for editing, and they do not open in standard programs or play on phones or tablets. The same is true for All-Intra, but at least you can edit it without too much trouble.

To conclude, here is a summary table with all the key information.

Additional Considerations

A number of GH5 users have upgraded to the S5; I was one of them, until I sold the camera after one month of use and after buying a Ninja V. If you are a Panasonic S1/S5 user, you have to contend not only with recording time limits but also with the lack of codecs on the camera that would let you use its full potential. You need to add an external recorder to really see the benefits: in real-life situations you are not shooting a step chart, so compression artefacts and errors destroy the usable dynamic range and the SNR drops. It would be interesting to test how the GH5M2 400 Mbps All-Intra compares with one of the S cameras using the 150 Mbps 10 bit codec, but this is not something I did. I would only warn anyone going down that path that you may get less than you think and may need additional hardware to get there. Also take into account that the S series only shoots 50/60 fps in APS-C/Super 35 mode, and that in full frame mode there is a substantial amount of rolling shutter that makes pans and tilts practically impossible.